LLM Radar

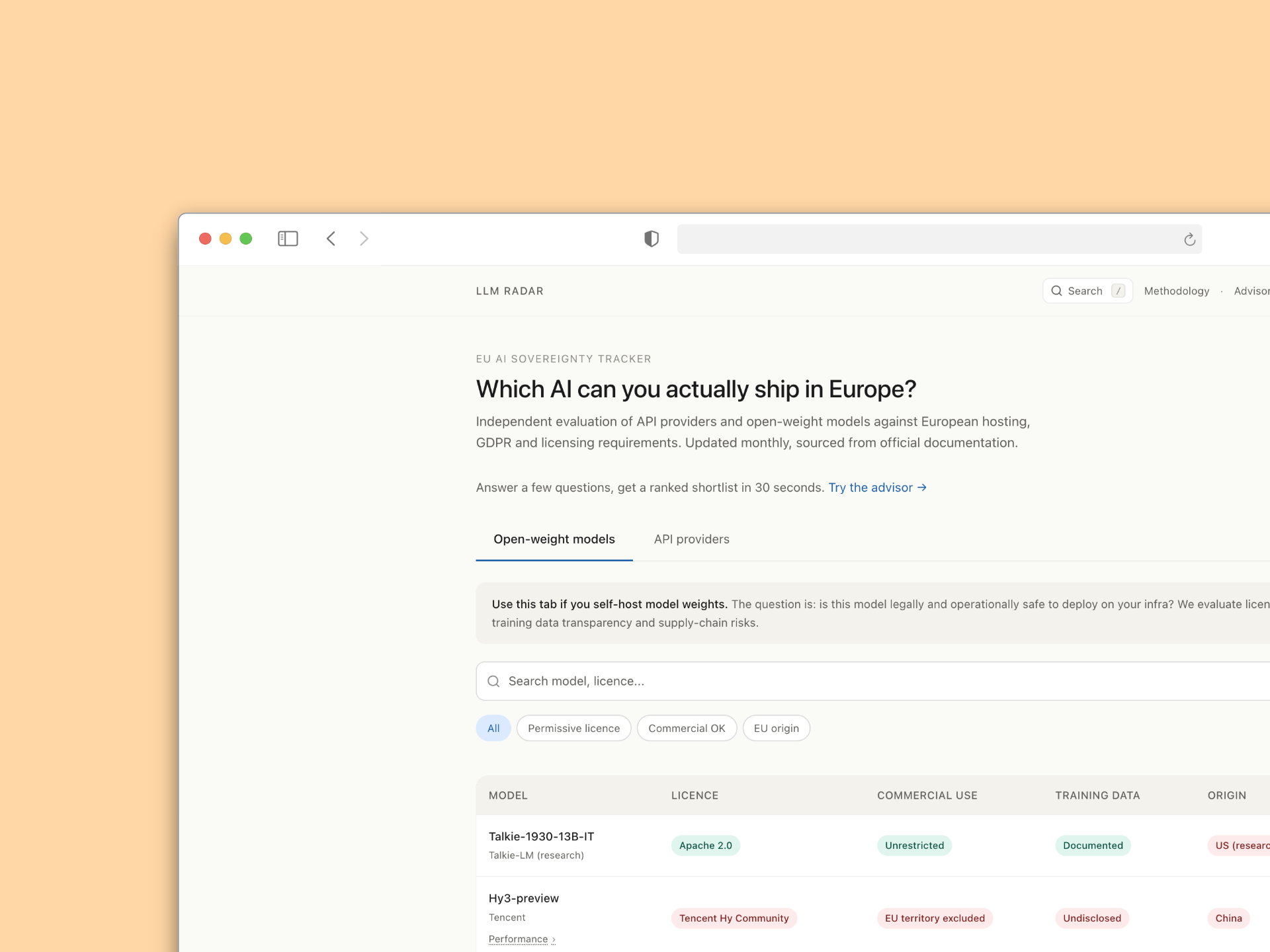

Which AI can you actually ship in Europe? Independent evaluation of API providers and open-weight models against European hosting, GDPR and licensing requirements. Updated monthly, sourced from official documentation.

European CTOs, DPOs and platform leads waste weeks reconciling vendor trust pages, CNIL opinions and legal blog interpretations to answer a simple question: can we deploy this model? I built LLM Radar to compile that signal in one place, so a procurement decision takes minutes instead of a back-and-forth between three teams.

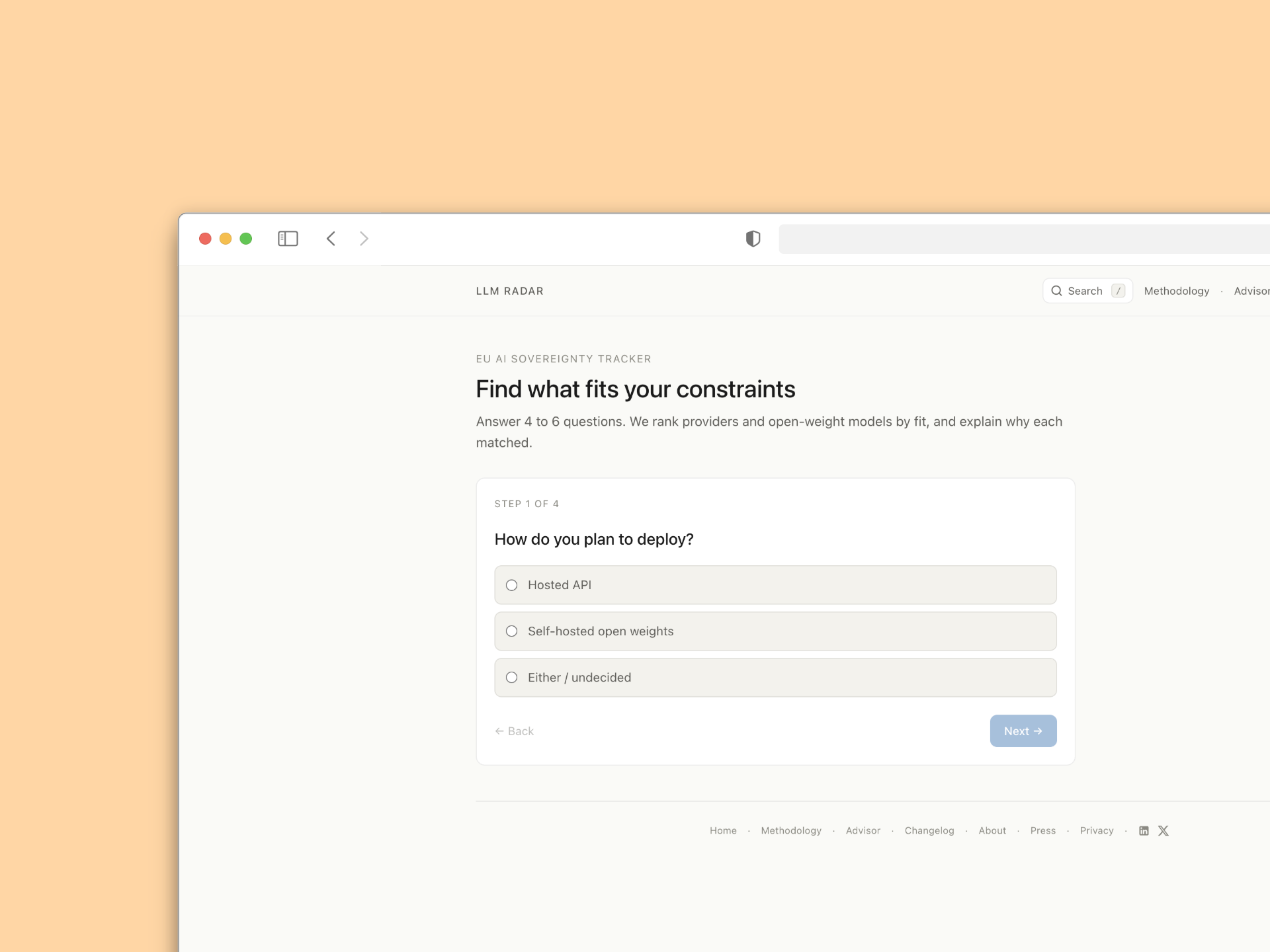

The advisor is the part I am most proud of. Instead of dumping a 47-row table on a stressed product lead, it asks four to six targeted questions about hosting needs, commercial scope and risk tolerance, then returns a ranked shortlist tailored to the team's actual constraints. Every match comes with an explanation of why it fits, so the output is defensible in front of legal.
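The advisor's flow (a handful of answers in, a ranked and explained shortlist out) can be illustrated with a minimal sketch. It assumes a simplified catalogue in which each model carries three hypothetical fields (`eu_hosting`, `commercial_use`, `risk_level`), treats the answers as hard filters, and ranks the surviving models by how much headroom they leave under the stated risk tolerance. All class, function and model names are invented for illustration; this is not LLM Radar's actual scoring logic.

```python
from dataclasses import dataclass, field


@dataclass
class Model:
    name: str
    eu_hosting: bool      # hypothetical: can be hosted in an EU region
    commercial_use: bool  # hypothetical: licence permits commercial use
    risk_level: int       # hypothetical: 1 (low) to 3 (high) compliance risk


@dataclass
class Answers:
    needs_eu_hosting: bool
    commercial: bool
    max_risk: int


@dataclass
class Match:
    model: Model
    score: int
    reasons: list[str] = field(default_factory=list)


def shortlist(models: list[Model], answers: Answers, top_n: int = 3) -> list[Match]:
    """Filter on hard constraints, then rank survivors and record why each fit."""
    matches = []
    for m in models:
        # Hard constraints: drop any model that violates a stated requirement.
        if answers.needs_eu_hosting and not m.eu_hosting:
            continue
        if answers.commercial and not m.commercial_use:
            continue
        if m.risk_level > answers.max_risk:
            continue
        # Each reason corresponds to a check the model actually passed.
        reasons = []
        if answers.needs_eu_hosting:
            reasons.append("can be hosted in the EU")
        if answers.commercial:
            reasons.append("licence permits commercial use")
        reasons.append(f"risk level {m.risk_level} within tolerance {answers.max_risk}")
        # Soft ranking: more headroom under the risk tolerance ranks higher.
        score = answers.max_risk - m.risk_level
        matches.append(Match(m, score, reasons))
    # Highest score first; ties broken alphabetically for a stable order.
    matches.sort(key=lambda x: (-x.score, x.model.name))
    return matches[:top_n]


# Usage with a hypothetical four-model catalogue.
catalogue = [
    Model("model-a", eu_hosting=True, commercial_use=True, risk_level=1),
    Model("model-b", eu_hosting=False, commercial_use=True, risk_level=1),
    Model("model-c", eu_hosting=True, commercial_use=True, risk_level=2),
    Model("model-d", eu_hosting=True, commercial_use=False, risk_level=1),
]
answers = Answers(needs_eu_hosting=True, commercial=True, max_risk=2)
for match in shortlist(catalogue, answers):
    print(match.model.name, match.score, "; ".join(match.reasons))
```

Keeping a reason list alongside each score is what makes the output explainable: every line of the explanation maps to a constraint the model passed, rather than to an opaque weighted total.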

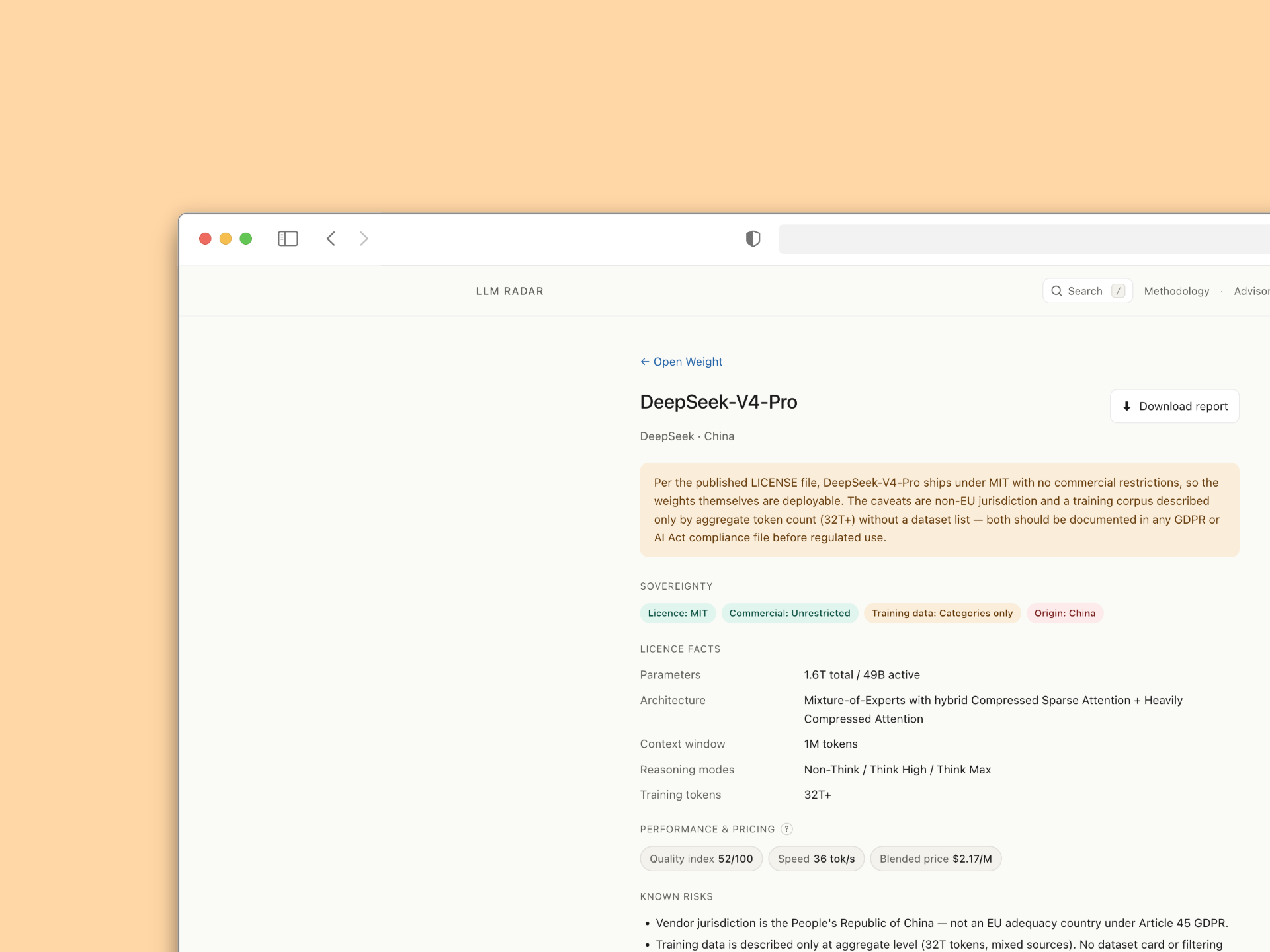

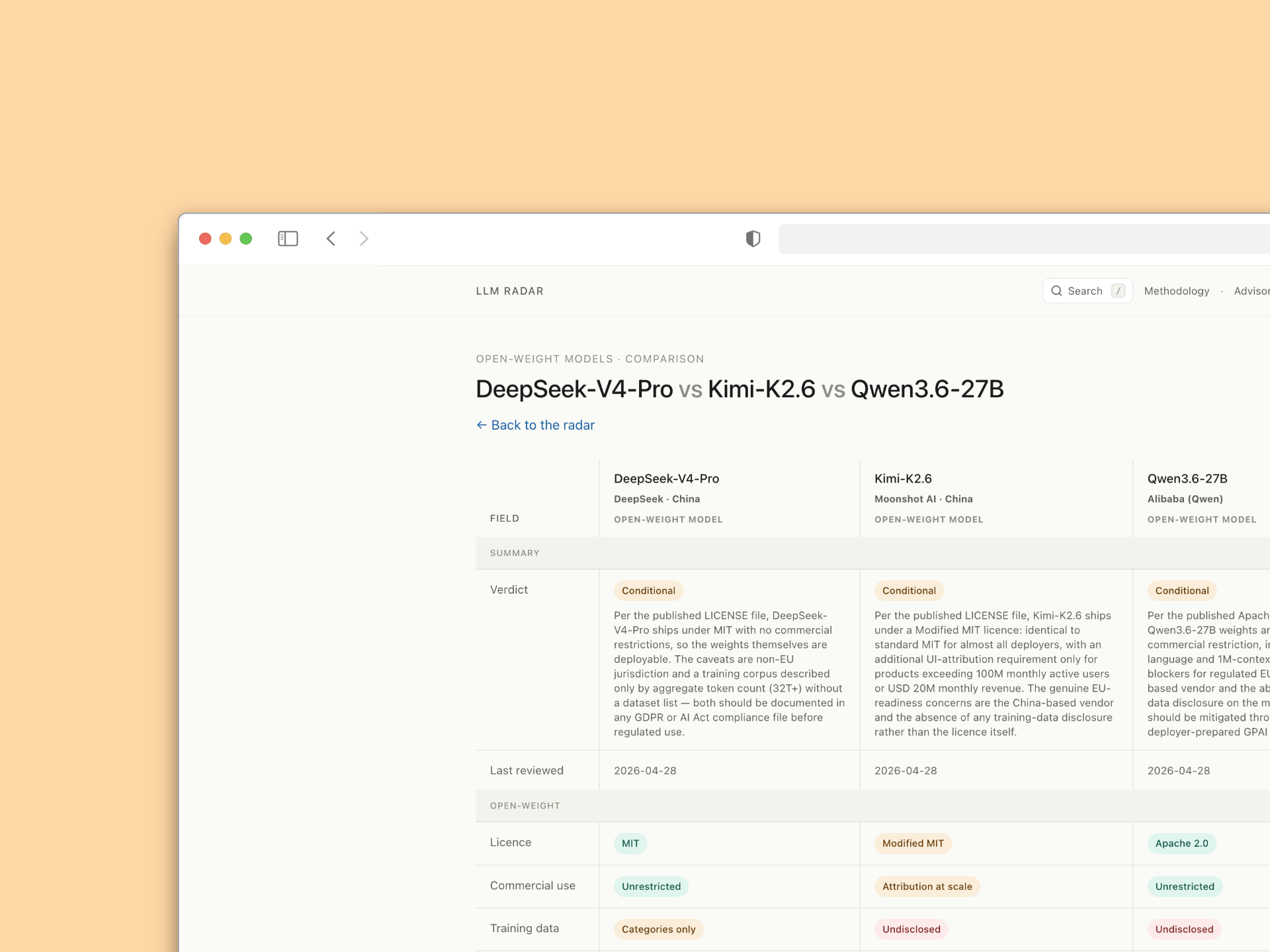

Each entry follows the same structure: a plain-language verdict at the top, the licence and sovereignty facts as tags, then technical specs, performance and pricing, and finally a known-risks section that calls out exactly what needs to go in a compliance file. The comparison view lets teams put two or three candidates side by side on the same fields, which is how procurement works in practice.
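A uniform entry structure is also what makes side-by-side comparison cheap. The sketch below assumes a hypothetical `Entry` record mirroring the sections described above (the `context_window` and `price_eur_per_m_tokens` fields merely stand in for specs and pricing) and a hypothetical `compare` helper that pivots two or three entries into one row per field. Field names and sample values are illustrative only, not the register's real schema.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    name: str
    verdict: str                   # plain-language verdict shown at the top
    tags: tuple[str, ...]          # licence and sovereignty facts
    context_window: int            # hypothetical stand-in for technical specs
    price_eur_per_m_tokens: float  # hypothetical stand-in for pricing
    known_risks: tuple[str, ...]   # items to carry into a compliance file


def compare(entries: list[Entry], fields: list[str]) -> list[tuple]:
    """Pivot entries into a table: a header row, then one row per field."""
    if not 2 <= len(entries) <= 3:
        raise ValueError("compare two or three candidates")
    rows = [("field", *(e.name for e in entries))]
    for f in fields:
        # Every entry shares the same fields, so each row lines up column by column.
        rows.append((f, *(getattr(e, f) for e in entries)))
    return rows


# Usage with two hypothetical entries.
a = Entry("model-a", "Deployable in an EU region", ("open weights", "EU-hosted"),
          128000, 0.5, ("training-data provenance unclear",))
b = Entry("model-b", "Needs a transfer assessment", ("proprietary", "US-hosted"),
          200000, 3.0, ("third-country transfer",))
for row in compare([a, b], ["verdict", "tags", "known_risks"]):
    print(row)
```

Because the comparison is driven by field names rather than per-model layouts, adding a new field to the schema makes it comparable across every entry at once.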

Every page is sourced from official documentation, carries the reviewer's name and is dated, so readers always know who signed off and when. The methodology behind every verdict is fully public, applies uniformly across the register and includes a right of reply for vendors who disagree. No sponsorship, no affiliate deals, no synthetic benchmarks. If a number does not inform an AI Act risk assessment, it does not belong on the page.